The function and definition of collateral and liquidity optimization have continued to expand from their roots in the early 2000s. Practitioners must now consider the application of connected data on security holdings to operationalize the next level of efficiency in balance sheet management. A guest post from Transcend.

The concept of connected data, or metadata, in financial markets can sound like a new age philosophy, but really refers to the description of security holdings and agreements that together deliver an understanding of what collateral must be received and delivered, where it originated and how it must be considered on the balance sheet. This information is not available from simply observing the quantity and price of the security in a portfolio. Rather, connected data is an important wrapper for information that is too complex to show in a simple spreadsheet.

In its earlier days, optimization meant ranking best-to-deliver collateral in an ordered list, or creating algorithms based on Credit Support Annexes and collateral schedules. These were effective tools in their day and were appropriate for the level of balance sheet expertise and technology at hand; some were in fact quite advanced. These techniques enabled banks and buy-side firms to take advantage of best pricing in the marketplace for collateral assets that could be lent to internal or external counterparties. Many of these techniques are still in use today. While they deliver on what they were designed for, they are fast becoming outmoded. Consequently, firms relying on these methodologies struggle to drive further increases in balance sheet efficiency and, in order to maintain financial performance targets, may need to charge higher prices. This is not a sustainable strategy.

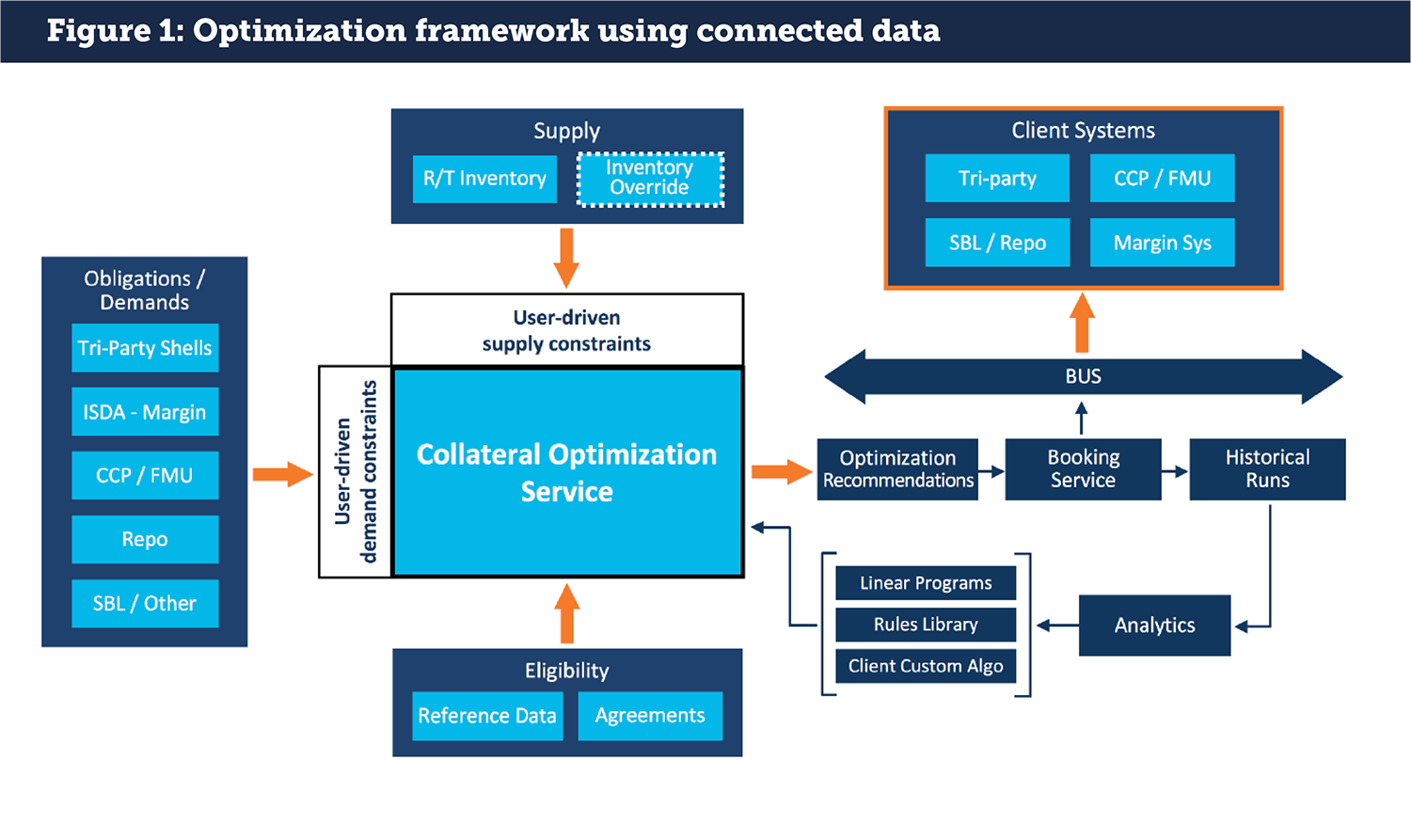

The next level of collateral optimization considers connected data in collateral calculations. Interest is being driven by better technology that can more precisely track financial performance in real time. A finely tuned understanding of the nature of the individual positions and how they impact the firm can in turn mandate a new kind of collateral optimization methodology, one that ranks cheapest-to-deliver collateral on a combination of performance-impacting factors and market pricing. This gives a new meaning to “best collateral” for any given margin requirement, and it only becomes possible when connected data is integrated into the collateral optimization platform.
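To make the contrast concrete, the following is a minimal sketch rather than a description of any particular platform. It assumes each position carries a hypothetical `market_cost_bps` (market pricing) and a hypothetical `balance_sheet_cost_bps` (the performance impact derived from connected data); the field names, the simple additive cost and the sample figures are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    security_id: str
    source: str                    # connected-data tag: "firm" or "client" (assumed)
    market_cost_bps: float         # hypothetical market pricing, in basis points
    balance_sheet_cost_bps: float  # hypothetical balance sheet impact, in basis points


def rank_by_market_price(positions):
    """Earlier approach: order collateral by market pricing alone."""
    return sorted(positions, key=lambda p: p.market_cost_bps)


def rank_by_total_cost(positions):
    """Connected-data approach: order by market pricing plus balance sheet impact."""
    return sorted(
        positions,
        key=lambda p: p.market_cost_bps + p.balance_sheet_cost_bps,
    )


# Illustrative figures only; they are not taken from any real portfolio.
positions = [
    Position("EQ_A", "firm", 10.0, 25.0),
    Position("EQ_B", "client", 15.0, 5.0),
    Position("GOVT_C", "firm", 20.0, 2.0),
]

print([p.security_id for p in rank_by_market_price(positions)])
print([p.security_id for p in rank_by_total_cost(positions)])
```

With these sample numbers, the asset that looks cheapest on market pricing alone drops to last place once its assumed balance sheet impact is included, which is the sense in which "best collateral" changes meaning.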

As an example of applying connected data, not all equities are the same on a balance sheet. A client position that must be funded has one implication while a firm position has another. Both bring a funding and liquidity cost. A firm long delivered against a customer short is internalization, which has a specific balance sheet impact. Depending on balance sheet liquidity, this impact may need additional capital to maintain. Likewise, the expected tenor of a position will impact liquidity treatments. A decision to retain or host these different assets as collateral can in turn feed back into the Liquidity Coverage Ratio, Leverage Ratio and other metrics for internal and external consumption.

If these impacts can be observed in real time, the firm may find that internalizing the position reduces balance sheet but is sub-optimal compared to borrowing the collateral externally. This of course carries its own funding and capital charges, along with counterparty credit limits and risk weightings in the bilateral market. These could in turn be balanced by repo-ing out the firm position, and by tenor matching collateral liabilities in line with the Liquidity Coverage Ratio and future Net Stable Funding Ratio requirements. Anyone familiar with balance sheet calculations will see that these overlapping and potentially conflicting objectives may result in decisions that increase or decrease costs depending on the outcome. By understanding the connected data of each position, including available assets and what needs to be funded, firms can make the best possible decision in collateral utilization. Importantly, the end result is to reduce slippage, increase efficiency, and ultimately deliver greater revenues and better client pricing based on smarter balance sheet management.
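The trade-off between internalizing and borrowing externally can be sketched as a comparison of total cost across sourcing options. This is an illustrative assumption about how such a comparison might be structured, not a regulatory calculation: the three cost components, their names and the sample values are hypothetical, and a real model would also need to respect counterparty credit limits and tenor constraints.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourcingOption:
    name: str
    funding_cost_bps: float    # hypothetical funding cost
    capital_charge_bps: float  # hypothetical capital charge
    liquidity_cost_bps: float  # hypothetical internal charge for liquidity ratio impact

    @property
    def total_cost_bps(self) -> float:
        # Assumes the components are additive, which is a simplification.
        return (
            self.funding_cost_bps
            + self.capital_charge_bps
            + self.liquidity_cost_bps
        )


def cheapest_option(options):
    """Return the option with the lowest combined cost."""
    return min(options, key=lambda o: o.total_cost_bps)


# Illustrative figures only.
options = [
    SourcingOption("internalize", 8.0, 6.0, 9.0),
    SourcingOption("borrow_external", 12.0, 4.0, 3.0),
]

best = cheapest_option(options)
print(best.name, best.total_cost_bps)
```

In this made-up case internalization has the lower funding cost but loses once the assumed liquidity charge is added, mirroring the situation described above.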

Another way to look at the new view of collateral optimization is as the second derivative. The first derivative was the ordering of lists or observation of collateral schedules. The next generation incorporates connected data across collateral holdings and requirements for a more granular understanding of what collateral needs to be delivered and where, and how this will impact the balance sheet and funding costs. It has taken some time to build the technology and an internal perspective, but firms are now ready to engage in this next level of collateral sophistication.

Implementing technology for connected data in collateral and liquidity

A connected data framework starts with assessing what data is available and what needs to be tagged to inform the next level of information about collateral holdings. This process is achievable only with a scalable technology solution: it is not possible to manage this level of information manually, let alone for real-time decision making. Building out a technology platform requires careful consideration of the end-to-end use case. If firms get this part right, they can succeed in building out a connected data ecosystem.

The connected data project also requires access to a wide range of data sources. Advances in technology have allowed data to be captured and presented to traders, regulators, and credit and operations teams. But right now, most data are fragmented, looking more like spaghetti than a coherent picture of activity across the organization. To be effective, data needs to flow from the original sources and be readable by each system in a fully automated way.
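As a rough sketch of what bringing fragmented sources together could look like, the snippet below joins a holdings feed with two separate tag sources into one record per position and reports which positions are still missing tags. The feed shapes, the `owner` and `tenor_days` tags and the sample data are assumptions chosen for illustration; real sources and identifiers will differ.

```python
def connect(holdings, ownership, tenors):
    """Attach ownership and tenor tags to each holding.

    Missing tags are recorded as None instead of being guessed.
    """
    connected = []
    for holding in holdings:
        sid = holding["security_id"]
        connected.append(
            {
                **holding,
                "owner": ownership.get(sid),     # e.g. "firm" or "client"
                "tenor_days": tenors.get(sid),   # expected tenor, if known
            }
        )
    return connected


def untagged(records):
    """List security ids that still lack one or more tags."""
    return [
        r["security_id"]
        for r in records
        if r["owner"] is None or r["tenor_days"] is None
    ]


# Hypothetical feeds from three separate systems.
holdings = [
    {"security_id": "EQ_A", "quantity": 1000, "price": 50.0},
    {"security_id": "GOVT_C", "quantity": 500, "price": 99.5},
]
ownership = {"EQ_A": "client", "GOVT_C": "firm"}
tenors = {"EQ_A": 30}  # GOVT_C has no tenor on record

records = connect(holdings, ownership, tenors)
print(untagged(records))
```

Surfacing the untagged positions explicitly, rather than defaulting them, keeps gaps in the source data visible before the records reach an optimization step.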

Once a usable, tagged data set has been established, it can be applied to collateral optimization to produce actionable results. Applications include what-if scenarios, algorithmic trading and workflow management, and extend further to areas like transfer pricing analytics. Assessing and organizing the data, then tagging it appropriately, can yield broad-ranging results.

Building out the collateral mindset

An evolution in the practice of collateral optimization requires a more holistic view of what collateral is supposed to do for the firm and how to get there. This is a complex cultural challenge and is part of an ongoing evolution in capital markets about the role of the firm, digitization and how services are delivered. While the shift is difficult to measure, market participants can point qualitatively to differences in how they and their peers think about collateral today versus five years ago. The further back one looks, the greater the change, which naturally suggests challenges in envisioning a possible future state.

An important element to developing scalable collateral thinking is the application of technology; our observation is that technology and thinking about how the technology can be applied go hand-in-hand. As each new wave of technology is introduced, new possibilities emerge to think about balance sheet efficiency and also how services are delivered both internally and to clients. In solving these challenges for our clients using our technology, it is evident that a new vision is required before a technology roadmap can be designed or implemented.

The application of connected data for the collateral market is one such point of evolution. Before connected data were available on a digitized basis, collateral desks relied either on ordered lists or on individual, manual understandings of which positions were available for which purposes. There was no conversation about the balance sheet except in general terms. Now, however, standardized connected data means that every trading desk, operations team and balance sheet decision maker can refine options for what collateral to deliver based on the best balance sheet outcome in near real time. Scenarios can be run that were never possible before, and client pricing that used to take hours, if not a day or more, can be obtained far more quickly.

Now that collateral optimization based on connected data is available, firms must think about what services they can deliver to clients on an automated basis, and what should be bundled and what should be kept disaggregated. As new competitors loom in both the retail and institutional space, these sorts of conversations driven by technology and collateral become critical to the future of the business. Connected data is leading the way.

This article was originally published on Securities Finance Monitor.